What Is Physical AI: If you have been reading about the latest breakthroughs in technology, you have likely come across the term Physical AI. This exciting field is moving artificial intelligence off computer screens and into the physical world around us. In 2026, Physical AI is no longer just a concept discussed in labs. It is actively working inside advanced robots that operate in factories, warehouses, hospitals, and even early home settings across the United States.

Physical AI gives machines the ability to understand their surroundings, make smart decisions, and perform useful actions in the real world. Unlike regular AI that only processes data or generates text, Physical AI connects intelligence directly with physical bodies. This guide explains everything in simple, practical terms so you can clearly understand how it works and why it matters for American workers, businesses, and families. By the end, you will have real knowledge you can use to stay informed about this fast-growing technology.

The best part is that Physical AI is already solving real problems like labor shortages in manufacturing and logistics. Companies such as Tesla, Boston Dynamics, and Figure AI are leading the way, creating robots that work safely alongside humans. Let’s dive deep into what Physical AI really is and how it functions inside today’s robots.


What Is Physical AI?

Physical AI refers to artificial intelligence systems that are designed to interact with the physical world through a body or machine. It combines sensing, thinking, and acting in one continuous process. The robot gathers information from its environment using cameras, touch sensors, and other tools. Then its AI brain processes that information and decides the best action. Finally, motors and joints carry out the movement.

This sense-think-act loop happens constantly and quickly. That is what makes Physical AI different from everything that came before it. A Physical AI robot does not just follow fixed instructions. It can adjust in real time when something unexpected happens, such as a box shifting position or a tool slipping from its grip.
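To make the loop concrete, here is a minimal Python sketch of sense-think-act in a toy one-dimensional world. Everything in it (the `SimulatedWorld` class, the `think` function, the 0.1 meter step limit) is a made-up illustration and not how any real robot is programmed. The point is only that the robot re-reads its sensors on every cycle, so it can still reach the box after the box shifts.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """Hypothetical sensor reading: box position along one axis, in meters."""
    box_position: float


class SimulatedWorld:
    """Toy 1-D world: a gripper must reach a box that may shift unexpectedly."""

    def __init__(self, gripper: float, box: float) -> None:
        self.gripper = gripper
        self.box = box

    def sense(self) -> Observation:
        return Observation(box_position=self.box)

    def act(self, step: float) -> None:
        self.gripper += step


def think(obs: Observation, gripper: float, max_step: float = 0.1) -> float:
    """Decide the next move: head toward the box, at most max_step per cycle."""
    error = obs.box_position - gripper
    return max(-max_step, min(max_step, error))


def run_loop(world: SimulatedWorld, cycles: int,
             shift_at: int, shift_by: float) -> float:
    """Run sense-think-act for a fixed number of cycles.

    Returns the final distance between gripper and box.
    """
    for cycle in range(cycles):
        if cycle == shift_at:
            world.box += shift_by             # the box moves unexpectedly
        obs = world.sense()                   # sense
        step = think(obs, world.gripper)      # think
        world.act(step)                       # act
    return abs(world.box - world.gripper)


if __name__ == "__main__":
    world = SimulatedWorld(gripper=0.0, box=1.0)
    print(run_loop(world, cycles=30, shift_at=5, shift_by=0.5))
```

A fixed-instruction robot would have driven to the original box position and stopped. Because this loop senses again before every move, the shift at cycle 5 simply becomes a new target.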

For everyday Americans, this means robots that are flexible enough to work in real factories and warehouses instead of only in perfect laboratory conditions. By making automation more capable and practical, Physical AI could also help bring manufacturing back to the U.S.

The technology builds on years of progress in machine learning, computer vision, and robotics hardware. Today’s systems use powerful models trained on huge amounts of video and sensor data. This training helps robots understand physics, gravity, textures, and object behavior in ways that feel almost natural.

How Physical AI Is Different from Traditional Robots and Digital AI

Traditional industrial robots have been used in car factories for decades. They are very good at repeating the same exact movement thousands of times. However, they often fail when something in their environment changes, even slightly. They need tightly controlled settings and detailed programming for every single task.

Digital AI, like the systems behind ChatGPT or image generators, works only with information and language. It cannot touch, move, or feel anything in the physical world. Physical AI successfully brings these two worlds together. It adds real-world awareness, physics understanding, and adaptive control to create machines that can handle messy, unpredictable situations.

This difference is huge for practical use. A Physical AI-powered robot can walk into a normal warehouse with boxes stacked unevenly and still complete its job. It learns from experience and gets better over time, just like a human worker does after gaining more practice on the job.

The breakthrough came with new types of AI models called vision-language-action models. These models allow a robot to watch a human demonstrate a task once and then attempt it itself. The robot adjusts its movements based on what it sees and feels in the moment. This is why 2026 robots look and behave much more intelligently than robots from just a few years ago.

Core Components That Make Physical AI Work in Real Robots

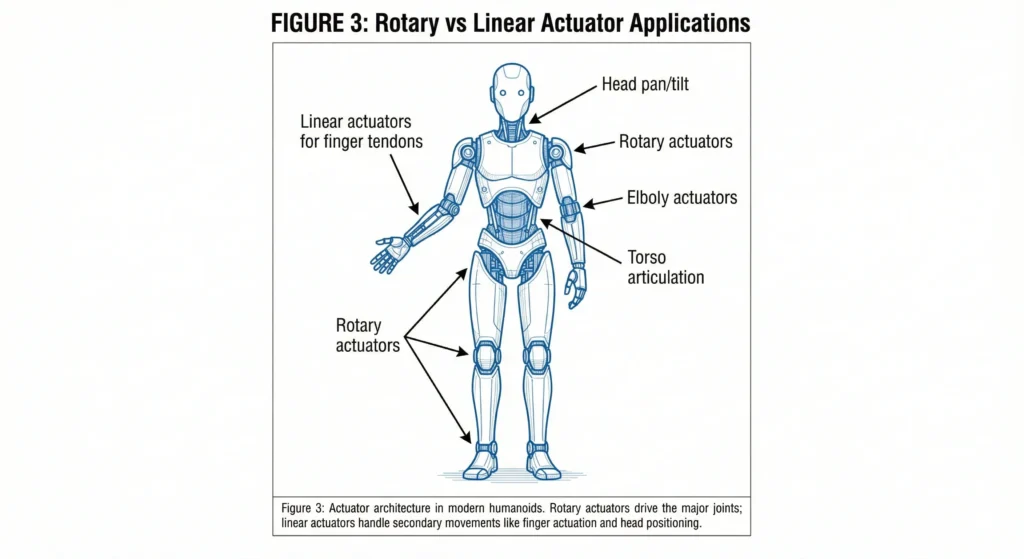

Every successful Physical AI robot depends on three main parts working perfectly together: advanced sensors, a smart AI brain, and powerful actuators.

Sensors act as the robot’s eyes, ears, and skin. High-quality cameras capture detailed images from multiple angles. Lidar creates accurate 3D maps of the surroundings. Tactile sensors in the hands and fingers detect pressure, texture, and slip. All this information is processed right on the robot, so decisions can be made with minimal delay instead of waiting for cloud servers.

The AI brain serves as the thinking center. Modern Physical AI uses large foundation models that understand both visual information and physical laws. These models are first trained heavily in detailed computer simulations. Later they are fine-tuned with real-world data collected from actual robots. This process gives the robot the ability to predict how objects will behave when pushed, pulled, or picked up.

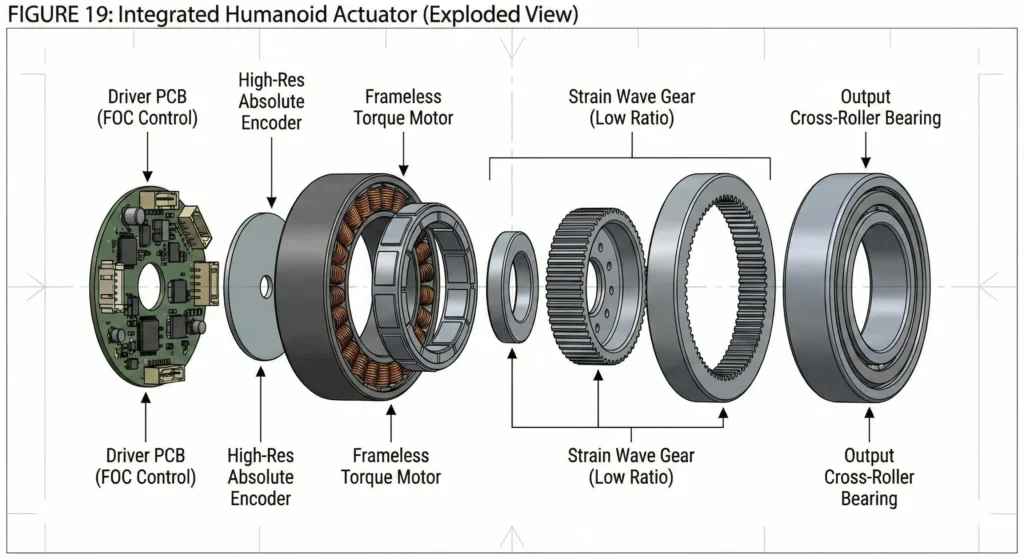

Actuators are the muscles of the robot. They include electric motors, precision gears, and joint systems that create smooth, human-like movements. The latest designs provide excellent force control, allowing the robot to handle delicate items without crushing them or dropping heavy ones. Together, these three components create a complete system capable of useful work in the real world.
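Force control can be pictured with a small Python sketch. It assumes a made-up gripper that tightens in 0.5 newton steps whenever a tactile sensor reports slip, and that never exceeds a safe limit for the item being held. The function names and numbers are hypothetical and chosen only to show the idea, not taken from any real robot.

```python
def next_grip_force(current: float, slip_detected: bool,
                    max_safe: float, increment: float = 0.5) -> float:
    """Tighten only while slip is reported, and never past max_safe (newtons)."""
    if not slip_detected:
        return current
    return min(max_safe, current + increment)


def settle_grip(required: float, max_safe: float, start: float = 0.5,
                max_cycles: int = 100) -> tuple[float, bool]:
    """Simulate gripping an object that slips whenever force < required.

    Returns (final_force, holding). holding is False when the object
    cannot be held without exceeding the safe force limit.
    """
    force = start
    for _ in range(max_cycles):
        slipping = force < required   # stand-in for a real tactile slip sensor
        if not slipping:
            return force, True
        tightened = next_grip_force(force, slipping, max_safe)
        if tightened == force:        # already at the safe limit, still slipping
            return force, False
        force = tightened
    return force, False
```

With these toy numbers, `settle_grip(3.0, 10.0)` returns `(3.0, True)`: the gripper stops tightening as soon as the slip stops. `settle_grip(3.0, 2.0)` returns `(2.0, False)`: the item would need more force than is safe, so the gripper gives up rather than crush it.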

How Physical AI Robots Are Trained for Real-World Performance

Training a Physical AI robot is a careful, step-by-step process that mixes simulation and actual practice. Companies begin with powerful simulation software where thousands of virtual robots can practice the same task millions of times. This helps the AI learn basic skills safely and quickly without risking expensive hardware.

After building strong foundations in simulation, the robot moves to real-world testing. Human operators often control the robot remotely at first to demonstrate correct movements. The AI carefully studies these demonstrations and turns them into general skills it can use in different situations. This method is called imitation learning and has proven very effective.
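In its simplest form, imitation learning means fitting a policy to recorded (situation, action) pairs from the operator. The Python sketch below uses a straight-line fit as the "policy" and a handful of invented demonstration values. Real systems use large neural networks and camera images, so treat this only as a picture of the idea.

```python
def fit_policy(demos: list[tuple[float, float]]) -> tuple[float, float]:
    """Least-squares fit of action = slope * state + intercept.

    demos holds (state, action) pairs recorded while a human teleoperates.
    Needs at least two different states, otherwise the variance is zero.
    """
    n = len(demos)
    mean_s = sum(s for s, _ in demos) / n
    mean_a = sum(a for _, a in demos) / n
    cov = sum((s - mean_s) * (a - mean_a) for s, a in demos)
    var = sum((s - mean_s) ** 2 for s, _ in demos)
    slope = cov / var
    intercept = mean_a - slope * mean_s
    return slope, intercept


def policy(state: float, params: tuple[float, float]) -> float:
    """Apply the learned policy to a state, including ones never demonstrated."""
    slope, intercept = params
    return slope * state + intercept


# Invented demonstrations: state = distance to the part (m),
# action = how far the operator moved the arm on that step (m).
DEMOS = [(0.0, 0.0), (0.2, 0.1), (0.4, 0.2), (0.8, 0.4), (1.0, 0.5)]

if __name__ == "__main__":
    params = fit_policy(DEMOS)
    print(policy(0.6, params))  # a distance the operator never demonstrated
```

The operator never showed the robot what to do at 0.6 meters, yet the fitted policy still produces a sensible action there. That is the sense in which demonstrations become general skills.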

As the robot practices more on its own, it uses reinforcement learning to improve. Every success and failure is recorded through its sensors. The system gradually gets better at handling variations like different lighting, slippery surfaces, or slightly different object shapes. Some companies even let their robots share learned experiences across entire fleets, making the whole group smarter much faster.
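The trial-and-error idea can be sketched with one of the simplest forms of reinforcement learning, an epsilon-greedy bandit. In this Python example a simulated robot chooses among three hypothetical grasp strategies with hidden success rates, records every success and failure, and gradually favors the strategy that works best. The success rates, trial count, and function name are assumptions made for illustration. Real robots learn far richer behaviors than picking one of three options.

```python
import random


def learn_grasp_strategy(success_rates: list[float], trials: int = 5000,
                         epsilon: float = 0.1, seed: int = 0) -> list[float]:
    """Estimate each strategy's success rate by trial and error.

    success_rates are the hidden true probabilities used by the simulation.
    Returns the learned estimate for each strategy.
    """
    rng = random.Random(seed)
    counts = [0] * len(success_rates)
    estimates = [0.0] * len(success_rates)
    for _ in range(trials):
        if rng.random() < epsilon:
            # explore: try a random strategy
            choice = rng.randrange(len(success_rates))
        else:
            # exploit: use the strategy that currently looks best
            choice = max(range(len(estimates)), key=estimates.__getitem__)
        reward = 1.0 if rng.random() < success_rates[choice] else 0.0
        counts[choice] += 1
        # running average of observed successes for this strategy
        estimates[choice] += (reward - estimates[choice]) / counts[choice]
    return estimates


if __name__ == "__main__":
    learned = learn_grasp_strategy([0.2, 0.5, 0.9])
    best = max(range(len(learned)), key=learned.__getitem__)
    print("preferred strategy:", best, "estimates:", learned)
```

The small `epsilon` keeps the robot occasionally trying strategies that look worse, which is how it discovers a better option it has not used much yet.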

This practical training approach means modern robots become useful much more quickly than older systems. American factories are already seeing reduced training time and faster deployment of new automation.

Leading Real-World Examples of Physical AI Robots in 2026

Tesla’s Optimus humanoid is one of the most talked-about examples. These robots are already working inside Tesla factories, handling battery cells and moving parts efficiently. With highly dexterous hands and advanced vision systems, Optimus can perform delicate assembly tasks that previously needed human precision. Tesla’s plan to produce them at large scale shows how Physical AI is moving from research to real production.

Boston Dynamics continues to impress with its Electric Atlas robot. This machine is known for its excellent mobility and balance. It can walk on uneven surfaces, climb steps, and work in tight industrial spaces. The robot uses sophisticated tactile sensing in its hands to feel whether parts are properly aligned or if more force is needed. Its smooth movements and adaptability make it valuable for many manufacturing and logistics jobs.

Figure AI’s Figure 03 model is gaining attention for its strong focus on safe collaboration with humans. These robots are being tested in real automotive plants where they load parts and assist workers. Their balanced design and reliable performance show how Physical AI can create machines suitable for both factory floors and future home environments. All three examples prove that Physical AI is delivering practical benefits right now.

Practical Applications of Physical AI Across American Industries

Manufacturing and logistics are seeing the biggest immediate benefits. Physical AI robots unload trucks, sort packages, and handle irregular items that older systems could not manage. This increases speed and reduces worker fatigue in physically demanding jobs. Companies report higher output and fewer errors in warehouses.

In healthcare, Physical AI improves surgical robots by adding better real-time adjustments and steadier movements. Rehabilitation exoskeletons use adaptive AI to match each patient’s unique walking pattern, helping people recover faster after injuries or surgeries. These applications directly improve patient outcomes and reduce strain on medical staff.

Agriculture and home care are also growing areas. Autonomous machines navigate fields and perform precise planting or harvesting. Early home robots can help with chores and support elderly family members. For many American households, this technology could provide meaningful assistance with daily tasks while maintaining dignity and independence.

Major Challenges Still Facing Physical AI Development

Despite impressive progress, Physical AI still faces important challenges. The gap between perfect simulation results and messy real-world conditions remains a key issue. Dust, changing light, or unexpected obstacles can still cause problems that engineers continue to solve.

Safety is another critical concern. Large, powerful robots must be extremely reliable when working near humans. Strict testing and updated safety rules, from regulators like OSHA and standards bodies such as ISO, are being developed to protect workers. Battery life also needs to improve, and maintenance costs need to fall, before robots can run nonstop for long periods.

Many people worry about job impacts. The practical reality is that Physical AI often takes over dangerous, repetitive, or exhausting tasks. This allows human workers to focus on more creative, supervisory, and problem-solving roles. Good training programs and smart policies will help American workers benefit from these changes instead of being left behind.

The Future of Physical AI and Real-World Robots

Looking ahead to the next few years, experts expect costs to drop significantly as production scales up. More advanced edge computing and better materials will make robots faster, lighter, and more energy efficient. Networks that let robots share knowledge across different locations could speed up learning dramatically.

For the United States, Physical AI offers a real opportunity to strengthen domestic manufacturing and shorten supply chains. New jobs will appear in robot maintenance, programming, and system oversight. States with strong industrial bases are likely to see fresh economic growth from this technology.

Why Understanding Physical AI Matters for You Today

Physical AI represents a major step forward in robotics. It is turning machines into capable partners that can sense, think, and act in our complex world. From factory floors to potential home use, the technology is already creating measurable improvements in productivity, safety, and quality of life.

The robots powered by Physical AI in 2026 are practical tools, not science fiction. They are helping solve real labor challenges while creating new opportunities for American businesses and workers. Taking time to learn about this technology now will help you understand the changes happening around you and prepare for the future.

Whether you are a business owner considering automation, a worker wanting to develop new skills, or simply someone interested in technology, Physical AI is a topic worth following closely. The intelligence that once lived only in computers is now stepping into the physical world, and it is changing how we live and work in meaningful ways.

Mahi

Hello friends! My name is Mahi, and I am the Founder & Writer of Studynumberone.Net. I write content related to AI, technology, making money, and education. I hope you like my articles!
