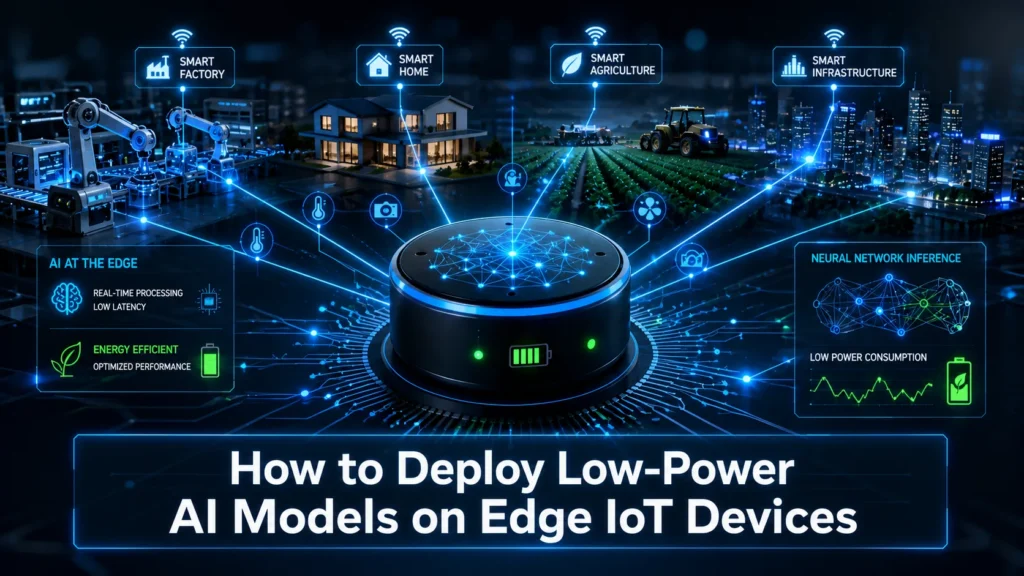

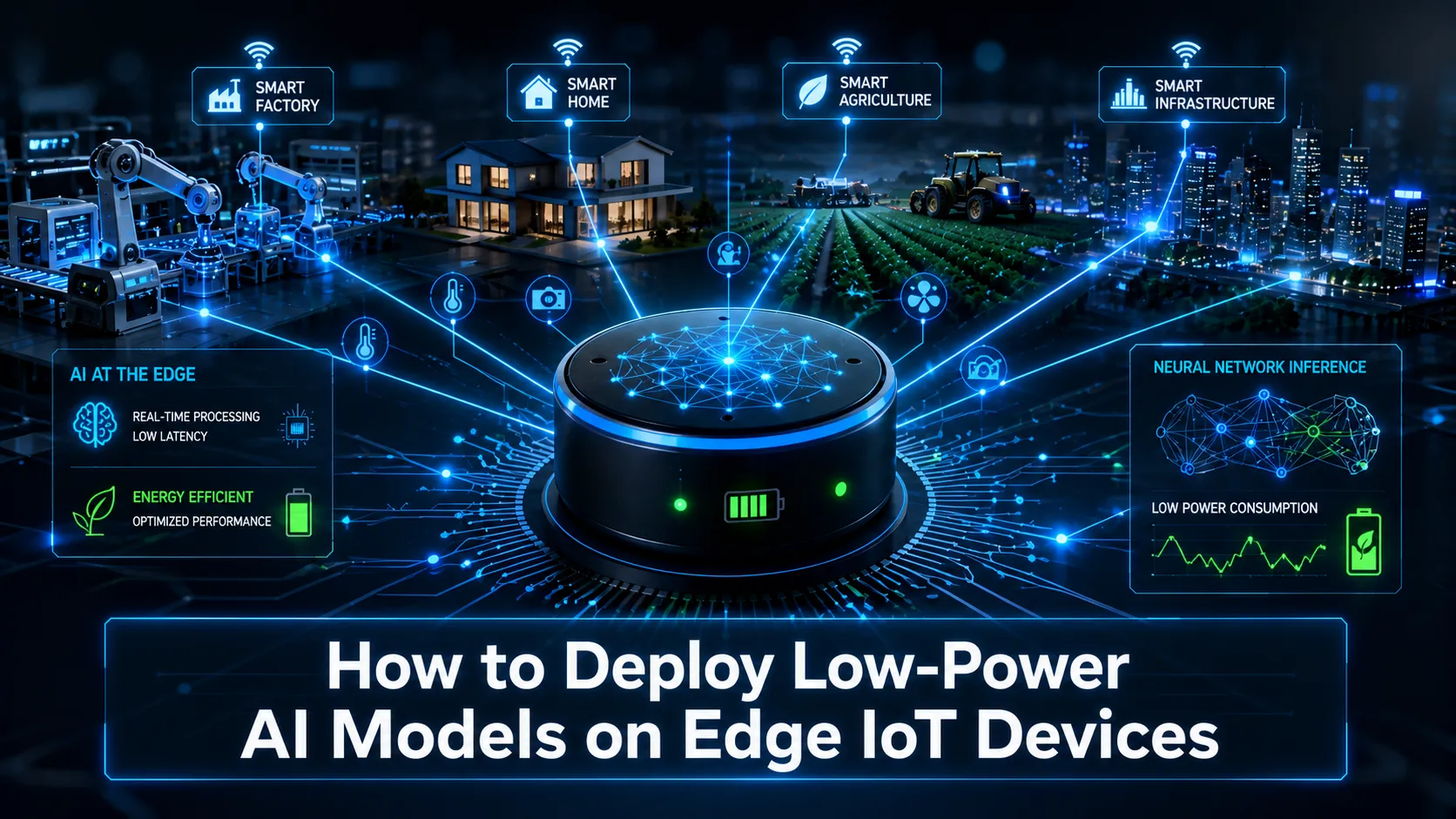

How to Deploy Low-Power AI Models on Edge IoT Devices: In our increasingly connected world, IoT devices surround us in homes, factories, farms, and cities. Cloud processing works for many tasks, but it adds network delay, raises privacy concerns, runs up data costs, and fails when the connection drops. Edge AI addresses these issues by letting devices analyze data right where they collect it.

For battery-powered or energy-limited IoT gadgets, running heavy AI models simply doesn't work. That's why low-power models, the focus of the field often called TinyML, matter so much. They bring real intelligence to tiny hardware while using very little electricity. This guide walks through the entire process as if we were working through it together in a workshop.

You will learn practical steps to pick hardware, shrink models effectively, deploy them, and keep systems running smoothly for months in real conditions. Whether you build prototypes or scale commercial products, these insights will help you create reliable solutions that run day after day without constant maintenance.

Why Edge AI Makes Sense for Modern IoT Systems

Edge AI means the device itself handles predictions and decisions instead of sending raw data to distant servers. A small sensor can spot machine problems through vibrations, recognize simple voice commands, or track environmental changes instantly.

This approach removes the network round trip from response time, which is essential for safety systems and autonomous equipment. It protects user privacy by keeping sensitive information on the device and reduces network traffic dramatically. Only important alerts or summaries travel across the internet, saving money and improving reliability in remote areas.

Low-power models become necessary because most IoT hardware runs within tight limits: small memory, limited storage, and strict energy budgets. Standard AI models either do not fit in memory at all or drain batteries within hours. Careful optimization makes intelligent features possible without sacrificing core performance on everyday tasks.

The Real Importance of Focusing on Low-Power Models

Energy use decides whether a device survives weeks or fails after days in the field. Remote sensors in agriculture or infrastructure monitoring cannot rely on frequent battery changes. Optimized models extend operation time significantly while keeping costs low.

Beyond batteries, lower power means less heat buildup, which helps devices last longer in tough environments like factories or outdoor installations. It also allows manufacturers to use more affordable chips. For businesses in competitive markets, this efficiency translates into better margins and greener products that appeal to customers.

Poor optimization causes unstable performance, unexpected shutdowns, and disappointed users. Success comes from balancing accuracy against speed and energy use. Define your minimum requirements, such as target accuracy, response time, and battery life, before building anything.

Common Obstacles When Putting AI on Constrained IoT Hardware

Memory and processing limits create the biggest headaches. Many microcontrollers offer a few hundred kilobytes of RAM or less, far less than typical neural networks demand. A model that exceeds the available memory either fails to load or crashes during operation.
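A quick calculation makes the memory problem concrete. The sketch below estimates weight storage for a model at float32 and int8 precision and checks it against a memory budget. The parameter count, the 256 KiB budget, and the 64 KiB allowance for activations and runtime overhead are all hypothetical placeholders. On most microcontrollers the weights sit in flash and the activations in RAM, so in practice you would check each budget separately.

```python
# Back-of-the-envelope memory check for a small model.
# All numbers in the demo are hypothetical placeholders, not measurements.

BYTES_PER_WEIGHT = {"float32": 4, "int8": 1}


def weights_kib(num_params: int, dtype: str) -> float:
    """Storage needed for the weights alone, in KiB."""
    return num_params * BYTES_PER_WEIGHT[dtype] / 1024


def fits(num_params: int, dtype: str, budget_kib: float, overhead_kib: float) -> bool:
    """True if weights plus an estimated overhead stay within the budget."""
    return weights_kib(num_params, dtype) + overhead_kib <= budget_kib


if __name__ == "__main__":
    params = 150_000   # hypothetical parameter count
    budget = 256.0     # hypothetical memory budget in KiB
    overhead = 64.0    # hypothetical activations + runtime allowance in KiB
    for dtype in ("float32", "int8"):
        verdict = "fits" if fits(params, dtype, budget, overhead) else "does not fit"
        print(f"{dtype}: {weights_kib(params, dtype):.1f} KiB of weights, {verdict}")
```

With these assumed numbers, the float32 version needs about 586 KiB for its weights alone and cannot fit, while the int8 version needs about 146 KiB and leaves room for the overhead allowance.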

Power management adds another layer of difficulty. Devices must stay in deep sleep most of the time and wake efficiently only when needed. Environmental noise, temperature changes, and sensor variations affect real-world accuracy. Models that shine in clean lab tests often struggle outside.
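Deep sleep matters because average current, not peak current, sets battery life. Here is a minimal sketch, assuming the device wakes for a fixed time each period and draws a constant current in each state. The currents, timing, and 1000 mAh capacity are hypothetical, and the estimate ignores battery self-discharge, regulator losses, and temperature effects.

```python
def average_current_ma(active_ma: float, sleep_ma: float,
                       active_s: float, period_s: float) -> float:
    """Time-weighted average current for a device that is awake for
    active_s seconds out of every period_s seconds."""
    if not 0 < active_s <= period_s:
        raise ValueError("active_s must be in (0, period_s]")
    duty = active_s / period_s
    return active_ma * duty + sleep_ma * (1 - duty)


def battery_life_days(capacity_mah: float, avg_current_ma: float) -> float:
    """Idealized runtime: capacity divided by average draw, in days."""
    return capacity_mah / avg_current_ma / 24


if __name__ == "__main__":
    # Hypothetical figures: 20 mA while sampling and running inference,
    # 10 uA in deep sleep, awake 0.5 s once per minute, 1000 mAh battery.
    always_on = battery_life_days(1000, 20.0)
    duty_cycled = battery_life_days(1000, average_current_ma(20.0, 0.01, 0.5, 60.0))
    print(f"always on:   {always_on:.1f} days")
    print(f"duty-cycled: {duty_cycled:.1f} days")
```

With these assumed figures, the always-on device lasts about two days, while the duty-cycled one stretches to several months on paper.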

Security concerns grow because edge devices are often physically accessible, which makes them easier to tamper with. Updates become tricky without reliable connections. Planning for these issues from the beginning prevents expensive redesigns later.

Selecting Suitable Hardware for Your Edge AI Project

Start with simple microcontroller boards for basic projects. Options like the Arduino Nano 33 BLE Sense or various ESP32 modules work well for audio, motion, or basic sensor tasks. The Nano 33 BLE Sense includes useful built-in sensors such as a microphone and a motion sensor, and both families are supported by popular optimization tools and offer low-power sleep modes for long battery life.

When your application needs vision or faster processing, consider boards with dedicated accelerators. Boards in the NVIDIA Jetson series provide far more compute for complex workloads, but they draw several watts, orders of magnitude more than a microcontroller. Match the hardware closely to your actual needs rather than choosing the most powerful option available.

Evaluate processor type, available memory, power modes, and community support before purchasing. Test power draw early using simple measurement tools. Affordable development boards help you experiment quickly before committing to custom designs for production.
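Once you have a current trace from a power profiler or shunt-based meter, integrating it gives the energy one inference actually costs, which is the number to compare across models and boards. Here is a minimal sketch, assuming evenly spaced current samples in milliamps and a constant supply voltage. The sample values are made up for illustration.

```python
from typing import Sequence


def energy_mj(current_ma: Sequence[float], dt_s: float, voltage_v: float) -> float:
    """Energy in millijoules from evenly spaced current samples.

    Integrates current over time with the trapezoidal rule
    (mA * s = mC), then multiplies by the supply voltage (mC * V = mJ).
    """
    if len(current_ma) < 2:
        raise ValueError("need at least two samples")
    charge_mc = sum((a + b) / 2 * dt_s for a, b in zip(current_ma, current_ma[1:]))
    return charge_mc * voltage_v


if __name__ == "__main__":
    # Made-up trace sampled every 10 ms: idle, one inference burst, idle.
    trace = [1.0, 15.0, 15.0, 1.0]
    print(f"{energy_mj(trace, 0.01, 3.3):.3f} mJ")
```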

Core Techniques to Optimize Models for Low Power Use

Quantization lowers the precision of numbers inside the model, typically from 32-bit floating-point values to 8-bit integers. This step cuts weight storage to roughly a quarter, speeds up calculations on hardware with efficient integer arithmetic, and saves substantial energy, usually with only a small loss of accuracy when done properly.
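Here is a minimal sketch of per-tensor affine (asymmetric) int8 quantization in plain Python, where each real value is approximated as scale * (q - zero_point). Production converters such as the TensorFlow Lite converter use refinements of this idea, for example per-channel scales for convolution weights, and calibrate ranges from representative data. The weight values here are hypothetical.

```python
from array import array


def choose_qparams(values, qmin=-128, qmax=127):
    """Pick scale and zero point so [min, max] (extended to include 0) maps onto [qmin, qmax]."""
    lo = min(min(values), 0.0)
    hi = max(max(values), 0.0)
    scale = (hi - lo) / (qmax - qmin) or 1.0   # avoid division by zero for all-zero input
    zero_point = max(qmin, min(qmax, round(qmin - lo / scale)))
    return scale, zero_point


def quantize(values, scale, zero_point, qmin=-128, qmax=127):
    """Map floats to clamped 8-bit integers."""
    return [max(qmin, min(qmax, round(v / scale) + zero_point)) for v in values]


def dequantize(q, scale, zero_point):
    """Recover approximate floats from the integers."""
    return [(x - zero_point) * scale for x in q]


if __name__ == "__main__":
    weights = [-0.5, 0.0, 0.25, 1.0]          # hypothetical float32 weights
    scale, zp = choose_qparams(weights)
    q = quantize(weights, scale, zp)
    restored = dequantize(q, scale, zp)
    print("int8 values:", q)
    print("max error:", max(abs(a - b) for a, b in zip(weights, restored)))
    # Storage per value: 4 bytes as float32 vs 1 byte as int8.
    print(array("f", weights).itemsize, "->", array("b", q).itemsize, "bytes per value")
```

Including zero in the range guarantees that 0.0 is represented exactly, which matters for padding and for weights zeroed by pruning.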

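Pruning removes connections or neurons that contribute little to the final result. You remove the unnecessary parts, then fine-tune the remaining network to recover accuracy. Zeroed-out weights only save memory if the model is stored in a compressed or sparse form, or if whole neurons or channels are removed; a dense runtime still stores and multiplies the zeros. Combining pruning with quantization delivers impressive compression while keeping the model functional for real tasks.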
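Here is a minimal sketch of unstructured magnitude pruning, one common criterion among several. It zeroes out the smallest-magnitude fraction of weights and keeps a mask so that fine-tuning cannot bring the pruned weights back. The weight values and the 50% sparsity target are hypothetical, and a real framework would apply this per layer to tensors rather than to a Python list.

```python
def magnitude_prune(weights, sparsity):
    """Zero the `sparsity` fraction of weights with the smallest |w|.

    Returns the pruned weights and a keep-mask (True = weight survives).
    """
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    k = int(len(weights) * sparsity)                  # how many weights to remove
    by_magnitude = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    removed = set(by_magnitude[:k])
    mask = [i not in removed for i in range(len(weights))]
    return apply_mask(weights, mask), mask


def apply_mask(weights, mask):
    """Re-zero pruned positions; call this after every fine-tuning update."""
    return [w if keep else 0.0 for w, keep in zip(weights, mask)]


if __name__ == "__main__":
    w = [0.9, -0.05, 0.3, 0.01, -0.7, 0.2]     # hypothetical layer weights
    pruned, mask = magnitude_prune(w, 0.5)
    print(pruned)
    # Simulated fine-tuning step: an update nudges every weight,
    # then the mask is reapplied so pruned weights stay at zero.
    updated = apply_mask([x + 0.01 for x in pruned], mask)
    print(updated)
```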

Knowledge distillation trains a compact model to mimic the outputs of a larger, more accurate one, transferring much of its knowledge into a network small enough for the device. Test every change thoroughly, measuring memory usage, speed, and actual power consumption on your target hardware.
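One widely used formulation of the distillation loss blends ordinary cross-entropy on the true label with a term that pulls the student's temperature-softened output distribution toward the teacher's. The sketch below computes it for a single example in plain Python. The temperature of 4 and the 50/50 weighting are hypothetical starting values, and in training you would compute this with your framework's tensor operations so gradients flow through the student.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax; higher temperature gives softer probabilities."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, label,
                      temperature=4.0, alpha=0.5):
    """alpha * hard-label cross-entropy + (1 - alpha) * T^2 * KL(teacher || student)."""
    # Hard term: how well the student predicts the true class.
    hard = -math.log(softmax(student_logits)[label])
    # Soft term: mismatch between softened teacher and student distributions.
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    soft = sum(t * (math.log(t) - math.log(s))
               for t, s in zip(p_teacher, p_student) if t > 0)
    # T^2 keeps the soft term's gradient scale comparable across temperatures.
    return alpha * hard + (1 - alpha) * temperature ** 2 * soft


if __name__ == "__main__":
    teacher = [4.0, 1.5, 0.2]     # hypothetical logits from the large model
    student = [2.0, 1.0, 0.5]     # hypothetical logits from the compact model
    print(round(distillation_loss(student, teacher, label=0), 4))
```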

Helpful Frameworks and Tools for Edge Deployments

TensorFlow Lite Micro works well on microcontrollers. It provides a small interpreter, optimized for 8-bit integer models, that runs without a full operating system. You convert a trained model into the compact .tflite format and embed it directly into the firmware as a byte array.
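The .tflite file itself typically comes from TensorFlow's `tf.lite.TFLiteConverter`. The TFLM documentation suggests embedding it with the `xxd -i` command; if that tool is not available, a few lines of Python produce an equivalent C++ source file. This is a minimal sketch: the array name `g_model`, the script name in the usage comment, and the 16-byte alignment are assumptions, so match them to whatever your firmware expects.

```python
import sys
from pathlib import Path


def tflite_to_cc(model_bytes: bytes, name: str = "g_model") -> str:
    """Render raw model bytes as a C++ source file with an aligned const array."""
    lines = [
        f"// Generated from a .tflite flatbuffer ({len(model_bytes)} bytes).",
        f"alignas(16) const unsigned char {name}[] = {{",   # alignment is an assumption
    ]
    for start in range(0, len(model_bytes), 12):           # 12 bytes per line
        chunk = model_bytes[start:start + 12]
        lines.append("  " + ", ".join(f"0x{b:02x}" for b in chunk) + ",")
    lines.append("};")
    lines.append(f"const unsigned int {name}_len = {len(model_bytes)};")
    return "\n".join(lines) + "\n"


if __name__ == "__main__" and len(sys.argv) == 3:
    # Usage (hypothetical script name): python tflite_to_cc.py model.tflite model_data.cc
    src, dst = Path(sys.argv[1]), Path(sys.argv[2])
    dst.write_text(tflite_to_cc(src.read_bytes()))
```

In the firmware, the array is then passed to `tflite::GetModel()` to obtain the model the interpreter runs.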

Platforms like Edge Impulse simplify the entire workflow, from data gathering to final deployment. They provide optimization suggestions and performance estimates tailored to specific boards. ONNX, an open model format, helps move models between different training frameworks and runtimes.

Pick tools based on your hardware and team skills. Begin with user-friendly options to build momentum, then customize further as your project grows. Good documentation and examples speed up development considerably.

How to Deploy Low-Power AI Models on Edge IoT Devices: A Detailed Step-by-Step Process

First, clearly define your problem and gather relevant data from the actual sensors under real conditions. Include variations in lighting, temperature, and noise. Clean and label this data carefully, since its quality directly affects results.
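Sensor streams are usually cut into fixed-size, overlapping windows before training, and the same windowing must run on the device later. Here is a minimal sketch, assuming one label per sample and labeling each window by majority vote. The window size, stride, readings, and label names are hypothetical, and some pipelines instead drop windows that straddle two labels.

```python
from collections import Counter


def make_windows(samples, labels, window, stride):
    """Split a labeled sample stream into (window, label) pairs.

    Each window takes the most common label among its samples.
    """
    if len(samples) != len(labels):
        raise ValueError("samples and labels must have the same length")
    pairs = []
    for start in range(0, len(samples) - window + 1, stride):
        chunk = samples[start:start + window]
        majority = Counter(labels[start:start + window]).most_common(1)[0][0]
        pairs.append((chunk, majority))
    return pairs


if __name__ == "__main__":
    readings = [0.1, 0.2, 0.1, 0.0, 0.2, 1.5, 1.7, 1.4, 1.6, 1.8]   # made-up values
    tags = ["idle"] * 5 + ["fault"] * 5
    for chunk, tag in make_windows(readings, tags, window=4, stride=2):
        print(tag, chunk)
```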

Next, train a compact base model on a capable computer. Apply optimization steps like quantization and pruning, then evaluate multiple versions. Focus on the metrics that matter for your use case, such as latency, memory footprint, and energy per inference, not just top accuracy scores.
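Evaluating several optimized versions is easier when the decision rule is explicit. Here is a minimal sketch, assuming you have measured each candidate on a held-out test set and on the target board: discard anything that misses the accuracy, size, or latency requirement, then take the one with the lowest energy per inference. The candidate names and numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Candidate:
    name: str
    accuracy: float      # held-out accuracy, ideally on field-like data
    size_kib: float      # model size on the device
    latency_ms: float    # measured per-inference latency on the target board
    energy_mj: float     # measured energy per inference on the target board


def pick(candidates: Sequence[Candidate], min_accuracy: float,
         max_size_kib: float, max_latency_ms: float) -> Optional[Candidate]:
    """Lowest-energy candidate that meets every hard requirement, or None."""
    viable = [c for c in candidates
              if c.accuracy >= min_accuracy
              and c.size_kib <= max_size_kib
              and c.latency_ms <= max_latency_ms]
    return min(viable, key=lambda c: c.energy_mj) if viable else None


if __name__ == "__main__":
    options = [
        Candidate("float32", 0.95, 600.0, 120.0, 4.8),
        Candidate("int8", 0.94, 150.0, 40.0, 1.2),
        Candidate("int8-pruned", 0.91, 90.0, 30.0, 0.9),
    ]
    best = pick(options, min_accuracy=0.93, max_size_kib=256.0, max_latency_ms=100.0)
    print(best.name if best else "no candidate meets the requirements")
```

Treating accuracy as a floor rather than the objective means that, once the minimum is met, energy decides between the remaining options.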

Convert the model, integrate it with the device code, and implement smart power management, such as waking the processor on hardware interrupts instead of polling. Flash the firmware, run thorough lab tests, then move to field trials. Monitor performance over time and plan how you will deliver future updates.
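Planning for updates includes checking that a downloaded firmware image arrived intact before flashing it. Here is a minimal sketch of that one step, shown in Python for clarity and assuming the expected SHA-256 digest is delivered alongside the image; on a microcontroller the same check would run in the bootloader or update agent. The function name is hypothetical.

```python
import hashlib
import hmac


def image_is_intact(image: bytes, expected_sha256_hex: str) -> bool:
    """True if the image's SHA-256 digest matches the expected hex digest."""
    actual = hashlib.sha256(image).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(actual, expected_sha256_hex.strip().lower())


if __name__ == "__main__":
    image = b"\x00" * 1024                      # stand-in for a downloaded image
    published = hashlib.sha256(image).hexdigest()
    print(image_is_intact(image, published))                 # intact copy
    print(image_is_intact(image[:-1] + b"\x01", published))  # one corrupted byte
```

A hash only detects corruption. To reject a tampered image, the digest or image must also be signed, with the signature verified against a key stored on the device, which is what secure boot builds on.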

Learning from Practical Examples and Following Best Practices

Industrial sensors use these models to detect unusual vibrations and send alerts only when necessary. This saves energy while preventing costly breakdowns. In farming, solar-powered cameras identify issues locally without draining power on constant video streaming.
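The "alert only when necessary" pattern can be sketched as a small gate in front of the radio. In this minimal sketch, a fixed RMS threshold stands in for the trained model's anomaly score, and a cooldown suppresses repeated alerts while a fault persists. The threshold, cooldown length, and sample values are hypothetical.

```python
import math


def rms(window):
    """Root-mean-square amplitude of one window of vibration samples."""
    return math.sqrt(sum(x * x for x in window) / len(window))


class VibrationAlerter:
    """Decides, window by window, whether an alert is worth transmitting."""

    def __init__(self, threshold: float, cooldown_windows: int):
        self.threshold = threshold
        self.cooldown_windows = cooldown_windows
        self._quiet_left = 0          # windows remaining before another alert is allowed

    def process(self, window) -> bool:
        if self._quiet_left > 0:      # still cooling down after the last alert
            self._quiet_left -= 1
            return False
        if rms(window) > self.threshold:
            self._quiet_left = self.cooldown_windows
            return True               # wake the radio and send one alert
        return False                  # normal vibration: stay silent


if __name__ == "__main__":
    alerter = VibrationAlerter(threshold=1.0, cooldown_windows=2)
    normal, rough = [0.1, -0.1, 0.1, -0.1], [2.0, -2.0, 2.0, -2.0]
    print([alerter.process(w) for w in [normal, rough, rough, rough, rough]])
```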

Always profile power consumption throughout development. Design systems that stay mostly asleep. Build in safety mechanisms for unexpected conditions. Keep security in mind with secure boot processes and careful data handling. Start small, validate thoroughly, and expand gradually.
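One simple safety mechanism is refusing to act on inputs or predictions the device cannot trust. Here is a minimal sketch, assuming a single scalar sensor reading with a known plausible range and a model that outputs class probabilities. The 0.8 confidence floor, the range, and the label names are hypothetical.

```python
def safe_decision(probs, labels, reading, valid_range, min_confidence=0.8):
    """Return a label only when the input is plausible and the model is confident."""
    low, high = valid_range
    if not low <= reading <= high:
        return "sensor_fault"          # physically implausible input: don't trust the model
    best = max(range(len(probs)), key=lambda i: probs[i])
    if probs[best] < min_confidence:
        return "uncertain"             # weak prediction: defer or fall back to a safe default
    return labels[best]


if __name__ == "__main__":
    labels = ["normal", "overheat"]
    print(safe_decision([0.1, 0.9], labels, 71.5, (-40.0, 125.0)))
    print(safe_decision([0.55, 0.45], labels, 71.5, (-40.0, 125.0)))
    print(safe_decision([0.1, 0.9], labels, 900.0, (-40.0, 125.0)))
```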

Document your choices and measurements. Test under realistic long-term conditions rather than short demos. These habits separate successful production deployments from experimental projects.

Looking Ahead at Emerging Possibilities

New specialized chips, improved low-precision methods, and better update techniques continue to expand what edge devices can achieve. Privacy-focused training approaches and sustainable design principles are also gaining importance.

Communities and open resources help developers stay current, but always verify new ideas in your specific setup. The fundamentals of careful optimization and realistic testing remain constant even as tools evolve.

Final Thoughts on Creating Effective Edge AI Solutions

Deploying low-power AI on IoT edge devices demands thoughtful planning and persistent testing, but the benefits prove worth the effort. You gain faster responses, reduced operating costs, stronger privacy protection, and devices that work reliably for extended periods.

Begin with a straightforward prototype using accessible boards and tools. Learn from each iteration and scale what succeeds. These methods help build practical intelligent systems that solve real problems in homes, industries, and beyond.

Stay curious, experiment regularly, and focus on delivering value through efficient, dependable technology. With the right approach, anyone can develop edge AI solutions that perform consistently in demanding real-world conditions.

Mahi

Hello friends! My name is Mahi and I am the Founder & Writer of Studynumberone.Net. I write content related to AI, Technology, Make Money, and Education. I hope you like my articles!
